Oct 13th, ‘22/5 min read

Why MTTR should be a ‘business’ metric

One of the many pitfalls of friction between engineering and business is the lack of fundamental measurements on the health of engineering. But how does business measure engineering efficacy, and how does engineering posit its standing to business?

Engineering culture is not such an abstract concept as it’s made out to be.

Among all the inevitable entropy that comes with org design, choosing a stack, build-vs-buy, and structuring teams, the seemingly humdrum choice of a metric to measure the efficacy of engineering is always on the back burner. This has obvious cultural repercussions which manifest in weird and interesting ways. 😉

But, here’s the indelible truth:

💡 The management of engineering health is stuck in the stone age.

Think about it: In how many board meetings do “software-driven”, “engineering-first” companies report ‘uptime’ as a business metric? How is software health even evaluated? While sending investor updates, do companies report on any operational metrics from engineering? These are hard questions to ask, not just of management, but also of engineers who want their seat at the table.

Questions from business to engineering usually revolve around:

- Was this release done on time?

- By when will this feature be released?

- How are we keeping costs, capacity and performance in check? (Which, by the way, is itself opaque.)

- How are we doing on hiring?

This is so primitive, it’s embarrassing. To be clear, this is not just a business problem. How many engineering leaders speak the language of management? How much air cover is being built for teams that work round the clock to build products and features, focus on systems health, and reduce MTTR (Mean Time To Recovery)?

While several metrics help address this gap, I believe one of them stands out:

💡 MTTR.

Surfing the chaos of Conway’s Law

Being able to understand, develop and maintain your services and infrastructure gets a lot harder with scale. Correlations die as complexity grows. As companies scale first to dozens, then hundreds or even thousands of servers, the burden of keeping everything running falls on teams of engineers that depend almost entirely on tribal knowledge to do so.

As the product continues to scale and customer-facing teams continue to add features at the behest of sales, marketing, or ‘the business’, product reliability starts to tank. Customers find it harder and harder to use the product as sub-systems randomly fail, breaking crucial workflows and bleeding engagement and revenue. Since these failures are ephemeral and cause unpredictable and unreplicable effects, traditional bug-tracking processes - when they exist - fail to address them.

The wider organisation remains oblivious until the outages become severe enough that the product goes down completely.

By then it’s often too late - the systems that power the product are poorly understood and poorly documented. Nobody can pinpoint exactly when sub-systems fail and why.

Accountability becomes structurally infeasible because of the chaos.

The wider organisation responds by demanding the problem be fixed. “Let’s hire more engineers to get this fixed!” becomes the rallying cry. But more engineers can’t fix this, because the problem isn’t one of staffing; it is one of knowledge and communication. If anything, adding more engineers to the mix makes the problem worse, not better.

Once we frame the problem as being a consequence of the chaotic nature of building and scaling products, MTTR becomes a definitive metric in seeking a solution. MTTR as a metric helps businesses understand engineering better, as the MTTR reported by every team for the systems they own is a clear, reliable proxy for both quality and ownership. It also gives engineering leaders a critical axis to assess where the weak links in the wider system are, and which sub-systems and teams need their attention most.
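To make per-team reporting concrete, here is a minimal sketch in Python. The incident log, team names and timestamps are all hypothetical, and it assumes each incident records when the outage started and when service was restored. It only shows the shape of the report: one MTTR per owning team, worst first.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from statistics import mean

# Hypothetical incident log: (owning team, outage start, service restored).
INCIDENTS = [
    ("payments", datetime(2022, 9, 1, 10, 0), datetime(2022, 9, 1, 16, 0)),
    ("payments", datetime(2022, 9, 12, 2, 0), datetime(2022, 9, 12, 6, 0)),
    ("search", datetime(2022, 9, 3, 14, 0), datetime(2022, 9, 3, 15, 0)),
    ("search", datetime(2022, 9, 20, 9, 0), datetime(2022, 9, 20, 9, 30)),
]


def mttr_by_team(incidents):
    """Return {team: mean time to recovery} as timedeltas, worst team first."""
    durations = defaultdict(list)
    for team, started, restored in incidents:
        # Recovery time for one incident, in seconds.
        durations[team].append((restored - started).total_seconds())
    report = {team: timedelta(seconds=mean(secs)) for team, secs in durations.items()}
    # Sort so the teams that need the most attention come first.
    return dict(sorted(report.items(), key=lambda kv: kv[1], reverse=True))


if __name__ == "__main__":
    for team, mttr in mttr_by_team(INCIDENTS).items():
        print(f"{team}: {mttr}")
```

Sorting worst-first is a deliberate choice here: the point of the report is to show leaders where the weak links are, not to produce a single org-wide average that hides them.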

But, what does this really mean?

By owning and reporting MTTR, teams have no choice but to be accountable for the reliability of the code they write. This dramatically changes the culture of engineering.

“If you’re not on call for systems you take down, you have no skin in the game when it comes to keeping them up.”

As a team, if you want to lower your MTTR, you have no choice but to nip tribal knowledge in the bud, write better documentation, adopt robust quality control practices, and re-think intra- and inter-team communication from the ground up.

For engineering leaders, org design becomes critical. And this is not just about people, and how they interact with teams. It’s about software choices, language choices, creating a prioritization framework for features, goal setting on velocity et al.

Ex: A practice from Last9 I love: messages in personal Slack DMs are auto-deleted after 2 days. The aim is to control the spread of tribal knowledge by forcing engineers to talk in public Slack channels. (Full disclosure: I'm an investor in Last9)

In short, this is a direct application of Conway’s Law, which states that “organizations which design systems are constrained to produce designs which are copies of the communication structures of these organizations.”

And since software will fail, MTTR acts as a strong proxy to gauge org resiliency, team structures, and overall health.

💡 Above all, org bias is laid bare, because it’s measurable. As of now, none of that is visible to business apart from the usual randomised vanity metrics on uptime, features, and time taken to ship new code.

MTTR > Uptime

Uptime gives you absolutely no actionable goals to attack. Understanding uptime starts with acknowledging the inevitability of downtime. When this thinking percolates into business, things get a lot clearer.

MTTD (Mean Time To Detect) - a contributing metric to MTTR - should be an internal engineering metric for every team. This ultimately funnels into MTTR for businesses to gauge engineering.

The only way to improve uptimes is to drive MTTR actionables. MTTR is entirely about actionables:

“Hey, we are running at about 6 hours of MTTR for the last 5 outages. Of these, we’re averaging about 3 hours to figure out that a problem occurred. How about we get this down to 30 minutes?” - An actionable goal deduced from framing MTTR.
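The arithmetic behind that conversation can be sketched in a few lines of Python. The per-outage timings below are hypothetical, picked only so that the averages match the quote (3 hours to detect, 6 hours in total), and the projection assumes repair time stays the same once detection gets faster.

```python
from statistics import mean

# Hypothetical timings in hours for the last 5 outages:
# (time to detect, time to repair after detection).
OUTAGES = [(2.0, 3.0), (4.0, 2.0), (3.0, 4.0), (3.5, 2.5), (2.5, 3.5)]


def mttd(outages):
    """Mean Time To Detect: average of the detection phase only."""
    return mean(detect for detect, _ in outages)


def mttr(outages):
    """Mean Time To Recovery: average of detection plus repair."""
    return mean(detect + repair for detect, repair in outages)


def mttr_with_detection_target(outages, target_mttd):
    """Projected MTTR if average detection hit the target (repair unchanged)."""
    return mttr(outages) - mttd(outages) + target_mttd


if __name__ == "__main__":
    print(f"MTTD: {mttd(OUTAGES)} h, MTTR: {mttr(OUTAGES)} h")
    print(f"MTTR with 30 min detection: {mttr_with_detection_target(OUTAGES, 0.5)} h")
```

With these numbers, cutting detection from 3 hours to 30 minutes takes MTTR from 6 hours to 3.5 hours without touching the repair phase at all, which is why detection is often the first place to look.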

Looking at past failures and working out where most of the time was spent gives a cohesive picture of where engineering needs to focus. Identifying and isolating these issues in a microservices world builds engineering resiliency over time.

Driving MTTR as a north star metric forces discipline on engineers. You can’t throw your code over the wall and expect DevOps to sort out issues. You have to own the MTTR of your own code.

💡 Driving MTTD internally, and reporting MTTR as a business metric, helps build antifragility in engineering.

Let’s take a step back to process this. Why are engineers so critical to an org?

When the shit hits the fan, orgs depend on engineers to debug and explain what went wrong. Think about that for a moment. Let’s break down the value chain:

- Juhi is irate that her food order failed.

- Juhi posts on twitter that Company A sucks.

- Customer Support (CS) pings Juhi to resolve the issue.

- Whatever CS needs, sits with an engineer.

The engineer has to work out what went wrong and provide a definitive answer.

💡 If this happens at scale, the entire org is blindsided. An entire org is dependent on engineering to debug and ‘fix’ issues for business continuity.

All this chaos flows down into one function: Engineering. Given the onus of responsibility, and the inevitability of failure, MTTR beats uptime as a measure of engineering health. This is why engineering org design becomes important: business survival depends on how effective engineering is. But as you build your reliability journey, product, customer support and other functions should have a better understanding of reliability in the org. Deprecating MTTR dependencies starts with empowering teams across the org with MTTD tooling.

Imagine Customer Support being able to detect system failures before a customer points them out, without any help from engineering. This creates resilience in an org’s reliability mandate. This entire process starts by getting more accountability from engineering, and MTTR is that one ignored metric that binds the team. ✌️

Oh, and to wrap it all up:

Orgs that have the ‘capacity to suffer’ in the short term, because they invest in communication at the cost of building product, are antifragile in the long run.

Bouquets and brickbats are welcome. I'm reachable on twitter: https://twitter.com/ponnappa

Want to know more about Last9 and our products? Check out last9.io; we're building reliability tools to make running systems at scale, fun, and embarrassingly easy. 🟢

Contents

Newsletter

Stay updated on the latest from Last9.

Handcrafted Related Posts

When should I start thinking of observability?

How does one scale metrics maturity in a cloud-native world — A guide on observability tooling as your engineering org scales.

Piyush Verma

Why we auto-delete slack messages - killing tribal knowledge at Last9

At last9, we auto-delete slack messages after 2 days on all personal Direct Messages. These retention policies force teams to improve documentation, kill tribal knowledge and drive accountability for mistakes, errors.

Nishant Modak

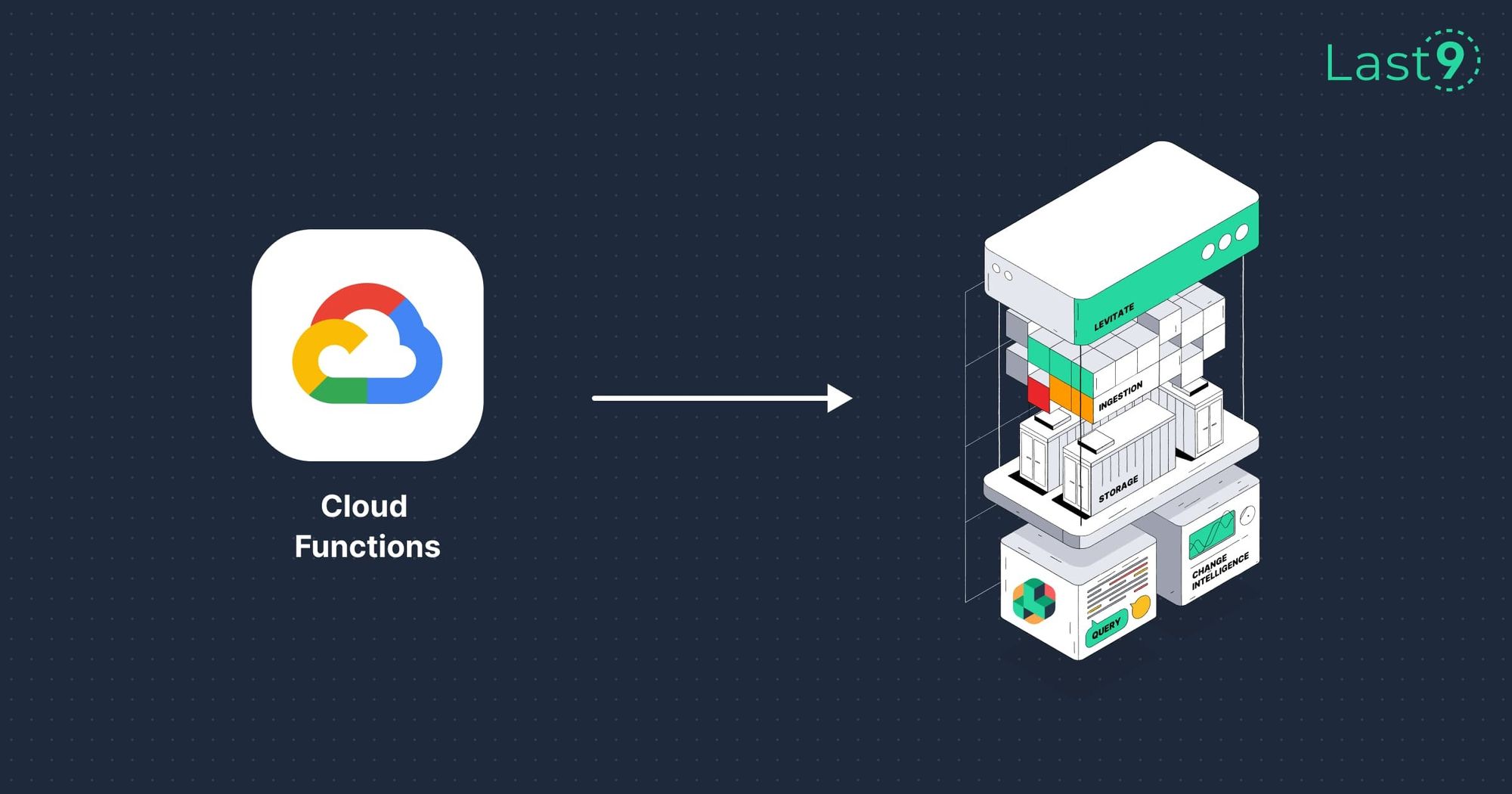

Monitor Google Cloud Functions using Pushgateway and Levitate

How to monitor serverless async jobs from Google Cloud Functions with Prometheus Pushgateway and Levitate using the push model

Aniket Rao