Dec 2nd, ‘21/5 min read

Deeper Dive into SLO: Effects on Development, Culture and Performance


A system's ability to fulfill its intended function at a point in time (also known as availability) is crucial to the success of SRE. It's almost impossible to deliver an "always-on" service that users can rely on without understanding and evaluating the specific behavior patterns that keep a system running smoothly. Thanks to Service Level Objectives (SLOs), your teams have a numerical threshold for system availability, so everyone has a clear vision of what keeps the users (and, consequently, the business) happy.

SLOs can help answer questions like:

- When considering reliability vs. features, how do we avoid sacrificing one for the other?

- How can we continue releasing new features without jeopardizing system availability or diminishing user experience?

- What's the most effective way of balancing operational vs. project/product work?

In a nutshell, SLOs act as a data-driven decision framework for development, operations, and product teams, guiding meaningful conversations about system architecture, service design, and feature releases.

Let's take a look at the many benefits that come from establishing SLOs at your company:

SLOs can provide the much-needed buffer

Because SLOs should be stricter than any external-facing agreements you have with your clients (such as SLAs), they ensure that problems are addressed long before the user experience starts deteriorating. Suppose your client agreement states that your services will be available 99% of the time each month. You can introduce an internal SLO of 99.9% and alert the team whenever availability falls below it. As a result, you have a reasonable time buffer to fix an issue before you violate the agreement.

- Service Level Agreement with a client: 99% availability (an acceptable downtime of 7.31 hours per month)

- Service Level Objective set internally: 99.9% availability (an acceptable downtime of 43.83 minutes per month)

- Safety time buffer: about 6.57 hours

With more than six and a half hours each month between breaching your internal SLO and breaching the client agreement, you can pace your deployment process with peace of mind.
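The downtime figures above follow from simple arithmetic. The Python sketch below reproduces them, assuming availability is measured over an average calendar month of 365.25 / 12 ≈ 30.44 days (730.5 hours), which is the month length the numbers imply; `allowed_downtime_hours` is a hypothetical helper name.

```python
# Assumption: an "average" month of 365.25 / 12 days, i.e. 730.5 hours.
HOURS_PER_MONTH = 365.25 / 12 * 24


def allowed_downtime_hours(availability_pct: float,
                           period_hours: float = HOURS_PER_MONTH) -> float:
    """Downtime (in hours) that an availability target permits over one period."""
    return period_hours * (100 - availability_pct) / 100


sla_downtime = allowed_downtime_hours(99.0)  # client-facing SLA: about 7.31 hours
slo_downtime = allowed_downtime_hours(99.9)  # internal SLO: about 43.83 minutes
buffer = sla_downtime - slo_downtime         # safety buffer: about 6.57 hours

print(f"SLO budget: {slo_downtime * 60:.2f} min; buffer before SLA breach: {buffer:.2f} h")
```

Changing `period_hours` (for example, to a rolling 28- or 30-day window) changes every figure, so it is worth agreeing on the measurement window before quoting downtime numbers.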

From a management perspective, setting an SLO of 100% can be very tempting but is highly unrealistic. With an SLO of 100%, your development process will be forever paralyzed by the fear that even the slightest, most trivial change could result in an SLO breach. "Setting an appropriate SLO is an art in and of itself, but ultimately you should endeavor to set a target that is above the point at which your users feel pain and also one that you can realistically meet," advises Garrett Plasky, former head of Evernote's SRE team. Your SLOs should not be aspirational.

SLOs can help combat alert fatigue

Measuring everything is impractical, given how large and complex most companies' technology infrastructure is. To set meaningful goals for your team, you must identify which metrics matter most.

Engineers typically respond to system failures, or even hints that something might be broken, by adding alerts. Over time, even the most minor issue gets its own alert, and alerts quickly multiply. Over-alerting leads to chaos and a drastic drop in productivity, and leaning heavily on pager alerts, which can arrive in clusters of tens to hundreds, only drives alert fatigue.

Over-alerting is never the answer. Nobody likes waking up at 3 a.m. to a pager alert only to find out it was about a couple of failed logout requests. SLOs can help you decide which alerts deserve immediate attention. If you define deliberate objectives, tie your SLOs to use cases that affect the user experience or cause business loss, and alert only on critical issues, you can pare hundreds of alerts and signals down to the few that matter.
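One widely used way to tie paging to SLOs, rather than to every symptom, is to alert on the error-budget burn rate: how fast current errors are consuming the budget the SLO allows. The Python sketch below is a minimal illustration, assuming request-based measurements and a 99.9% target; `WindowStats`, `burn_rate`, `should_page`, and the window sizes are hypothetical. The default threshold of 14.4 is an assumed example value: at that rate, a one-hour window consumes 2% of a 30-day error budget (14.4 × 1 h ÷ 720 h).

```python
from dataclasses import dataclass


@dataclass
class WindowStats:
    """Request counts observed over one alerting window (hypothetical input shape)."""
    total: int
    errors: int


def burn_rate(stats: WindowStats, slo_target: float) -> float:
    """Rate of error-budget consumption; 1.0 spends the budget exactly over the SLO period."""
    if stats.total == 0:
        return 0.0  # no traffic, so nothing is burned
    error_ratio = stats.errors / stats.total
    allowed_error_ratio = 1 - slo_target  # e.g. 0.001 for a 99.9% SLO
    return error_ratio / allowed_error_ratio


def should_page(long_window: WindowStats, short_window: WindowStats,
                slo_target: float = 0.999, threshold: float = 14.4) -> bool:
    """Page only if both windows burn budget at or above the threshold.

    The long window (e.g. 1 hour) filters out brief blips; the short window
    (e.g. 5 minutes) confirms the problem is still happening right now.
    """
    return (burn_rate(long_window, slo_target) >= threshold
            and burn_rate(short_window, slo_target) >= threshold)


# A couple of failed logouts in an hour of traffic: no page.
print(should_page(WindowStats(total=100_000, errors=2), WindowStats(total=8_000, errors=1)))
# A sustained 2% error rate: page.
print(should_page(WindowStats(total=100_000, errors=2_000), WindowStats(total=8_000, errors=160)))
```

Requiring both windows to exceed the threshold is a design choice: it keeps a short spike from paging anyone while still catching a sustained problem quickly.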

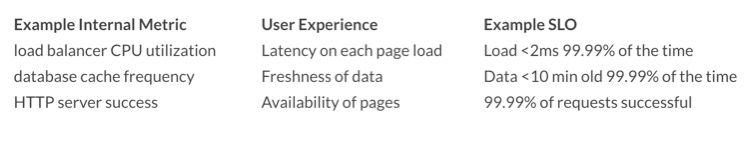

Of course, you may now be wondering how to choose these indicators accurately. Good load balancing can affect the latency a user experiences, but it is still several steps removed from the user's experience. Many metrics contribute to latency, and trying to make your SLO account for all of them creates too much noise. Instead, you can consolidate typical user experiences into metrics that directly reflect user happiness.
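A common way to fold many low-level signals, such as load-balancer behavior, into one user-facing indicator is a request-based SLI: the fraction of requests that succeeded and responded within a latency threshold. This Python sketch assumes you already have per-request status codes and latencies; the `Request` type, the 300 ms threshold, and the rule of treating 4xx responses as good are illustrative assumptions, not recommendations.

```python
from typing import Iterable, NamedTuple


class Request(NamedTuple):
    status: int        # HTTP status code
    latency_ms: float  # response time in milliseconds


def good_request_ratio(requests: Iterable[Request],
                       latency_threshold_ms: float = 300.0) -> float:
    """Share of requests that both succeeded and were fast enough.

    Assumption: 5xx responses count as failures; 4xx responses are treated as
    client errors and still count as "good" if they were fast.
    """
    total = good = 0
    for r in requests:
        total += 1
        if r.status < 500 and r.latency_ms <= latency_threshold_ms:
            good += 1
    return good / total if total else 1.0  # no traffic: treat as meeting the SLO


sample = [Request(200, 120.0), Request(200, 450.0), Request(503, 80.0), Request(200, 250.0)]
print(good_request_ratio(sample))
```

Comparing this ratio with the SLO target (for example, 0.999) tells you whether users are getting the experience you promised, regardless of which internal component caused a slow or failed request.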

You can also create separate alerting and response workflows for incidents that don't directly affect SLOs, which can improve prioritization and reduce alert fatigue.

SLOs can improve development agility

SREs and other team members involved in operations readily see the value of a safety net against downtime, but developers may find the idea harder to commit to. The good news is that error budgets give everyone an incentive to rally behind. An error budget is the amount of unreliability an SLO permits: a 99.9% availability SLO leaves a 0.1% budget, or about 43.83 minutes of downtime per month. For example, suppose you are implementing a change that could cut average load time by several milliseconds, but rolling it out may cause brief outages.

If you believe that "all outage episodes are unacceptable," it may be hard to justify this change. Error budgets give you a metric to justify such risks and encourage your team members to take them when warranted. Adopting an SLO- and error-budget-based engineering practice lets your team spend the budget strategically every month, whether on new feature launches or system-architecture changes. This allows you to innovate quickly without compromising reliability or user experience.
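Treating the error budget as something to spend can be made concrete. Below is a minimal Python sketch, assuming downtime-based accounting over the same 730.5-hour average month used for the figures earlier; the helper names and the estimated outage length are hypothetical, and many teams track their budget in failed requests rather than minutes.

```python
# Assumption: the same 730.5-hour average month used for the SLA/SLO figures.
MINUTES_PER_MONTH = 365.25 / 12 * 24 * 60


def remaining_budget_minutes(slo_pct: float, downtime_so_far_min: float,
                             period_min: float = MINUTES_PER_MONTH) -> float:
    """Error budget (in minutes of downtime) still unspent this period."""
    budget = period_min * (100 - slo_pct) / 100
    return budget - downtime_so_far_min


def can_ship_risky_change(slo_pct: float, downtime_so_far_min: float,
                          expected_outage_min: float) -> bool:
    """Ship only if the change's expected outage fits in the remaining budget."""
    return remaining_budget_minutes(slo_pct, downtime_so_far_min) >= expected_outage_min


# 12 minutes already spent against a 99.9% SLO; the change may cause about 5 minutes of outage.
print(can_ship_risky_change(99.9, downtime_so_far_min=12, expected_outage_min=5))
```

The decision rule is deliberately simple; in practice the estimated outage is a guess, so teams often keep part of the budget in reserve for unplanned incidents.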

For developers, SLOs should be an opportunity to maximize both product quality and innovation speed. Even the time spent in meetings refining SLOs should pay off by accelerating development. If SLOs are routinely met, you can relax them, while staying above the point at which users feel pain, to gain a larger error budget and give your development team more freedom.

The Cultural Impact of SLOs

We've already discussed how SLOs can encourage risk-taking among developers and give SREs some peace of mind. However, their true power lies in the cultural message behind them: failures are inevitable. Neither your internet provider nor the power grid can promise 100% availability, so insisting on 100% availability inevitably sets you up to fail.

The best tech companies, including Google and Facebook, embrace failure as the norm. Only when you accept that incidents will happen, build processes around them, and give your teams buffer time to respond can you drive toward better availability.

The data produced by consistent SLO use can also guide leadership teams, inform engineering investment decisions, and improve software reliability. For example, measuring performance against SLOs makes product roadmap decisions better informed, and product and engineering managers can use SLO performance to prioritize engineering resource allocation objectively.

In the End

Finding the right balance between investing in new features to attract new customers and investing in system reliability to keep your existing customers happy isn't easy. Many technology companies, including Google, rely on well-thought-out SLOs to make informed decisions about the opportunity cost of reliability vs. features and to prioritize the work that goes into both. After all, an SRE is responsible for more than just holding the pager and automating everything.

By using SLOs, you set a target level of system reliability for users. Your team can then quantify the relationship between a measurable technical metric (such as latency) and a key business metric (such as conversions).

Remember, defining SLOs is not a one-time activity. As your product evolves, so will your users' expectations. Reviewing and updating your SLOs periodically is essential so you can pinpoint actionable remedies when your services fail to deliver.
