Jul 12th, ‘20/7 min read

Root Cause Analysis For Reliability: A Case Study

Let's explore the importance of RCAs in Site Reliability Engineering, why you should use them, and our take on what constitutes a “good” RCA.

Wikipedia defines Root Cause Analysis (RCA) as “a method of problem-solving for identifying the root causes of faults or problems.” Essentially, root cause analysis means diving deeper into an issue to find what caused a non-conformance. What’s important to understand here is that Root Cause Analysis does not mean just looking at superficial causes of a problem. Rather, it means finding the highest-level cause: the thing that started a chain of cause-effect reactions and ultimately led to the issue at hand.

Root cause analysis methodology is widely used in IT operations, telecommunications, the healthcare industry, etc. In this post, I’ll take you through how to use RCA to make your system more reliable using an experience I had.

However, before discussing the case study, a note on the importance of RCA in Site Reliability Engineering.

Why should you use Root Cause Analysis for Reliability?

A good Root Cause Analysis looks beyond the immediate technical cause of the problem and helps you find the systemic root cause of the issue, which you then work on eliminating. Along with documenting the core issue and the steps to resolve it, an RCA also lends itself to the very next question an SRE will ask: is this happening elsewhere? Automating the solution and deploying it to the affected areas ensures that not only is the problem’s root cause taken care of, but problems of a similar nature are permanently fixed as well.

That being said, a not-so-thorough RCA makes the entire process futile. Here’s an incident I encountered a few years back and how my team went about doing a Root Cause Analysis for it.

How Things Went Down

Elasticsearch wakes up Pagerduty wakes up Oncall

It was one of those times when I thought everything was in order and working fine. And yet, a small slip led to an unexpected breakdown. It was around 7:30 AM, almost 25 hours before a country launch, and PagerDuty went off. The alerts kept insisting that something was breaking, so we went to Elasticsearch and found five or six requests that had returned 5XX errors.

Pagerduty auto resolves – good news or bad news?

Logs were coming in at around 1 Mbps. There wasn’t a correlation ID, so we couldn’t isolate, from the tons of logs, exactly where the issue was happening. Precisely 5 minutes later, the HTTP 500 errors stopped, and PagerDuty auto-resolved. We thought it was probably not a major issue and de-prioritized it.
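To illustrate what a correlation ID buys you, here is a minimal Python sketch that stamps every log line of a request with the same ID, so that a single failing request can be filtered out of a noisy log stream. It is not the setup we had at the time. The names `handle_request`, `build_logger`, and the `cid=` log field are assumptions made for this example.

```python
import contextvars
import logging
import uuid

# Holds the correlation ID of the request currently being handled.
correlation_id = contextvars.ContextVar("correlation_id", default="-")


class CorrelationIdFilter(logging.Filter):
    """Copy the current correlation ID onto every log record."""

    def filter(self, record):
        record.correlation_id = correlation_id.get()
        return True


def build_logger(name="api", stream=None):
    """Return a logger whose lines all carry the correlation ID."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.propagate = False
    logger.handlers.clear()
    handler = logging.StreamHandler(stream)  # defaults to stderr
    handler.setFormatter(
        logging.Formatter("%(levelname)s cid=%(correlation_id)s %(message)s")
    )
    handler.addFilter(CorrelationIdFilter())
    logger.addHandler(handler)
    return logger


def handle_request(logger, path, status, incoming_id=None):
    """Hypothetical handler: reuse the caller's ID or mint a new one."""
    token = correlation_id.set(incoming_id or uuid.uuid4().hex)
    try:
        logger.info("request started path=%s", path)
        level = logging.ERROR if status >= 500 else logging.INFO
        logger.log(level, "request finished path=%s status=%d", path, status)
        return correlation_id.get()
    finally:
        # Restore the previous value so IDs never leak between requests.
        correlation_id.reset(token)
```

With something like this in place, searching the logs for one `cid` value returns every line belonging to a failing request, instead of leaving you to guess which lines belong together.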

Pagerduty wrath unleashes in rinse-repeat mode

Five minutes later, exactly by the clock, Pingdom started alerting PagerDuty again that the public API was unreachable. We had set up all the tools we could think of. Grafana was showing us some 5xx requests, and the alerts went to Sentry through the standard route: Elasticsearch > ElastAlert > Sentry. The issue kept going until exactly 7:45 AM, five minutes later. Then the 500s stopped, and PagerDuty auto-resolved. This kept repeating.

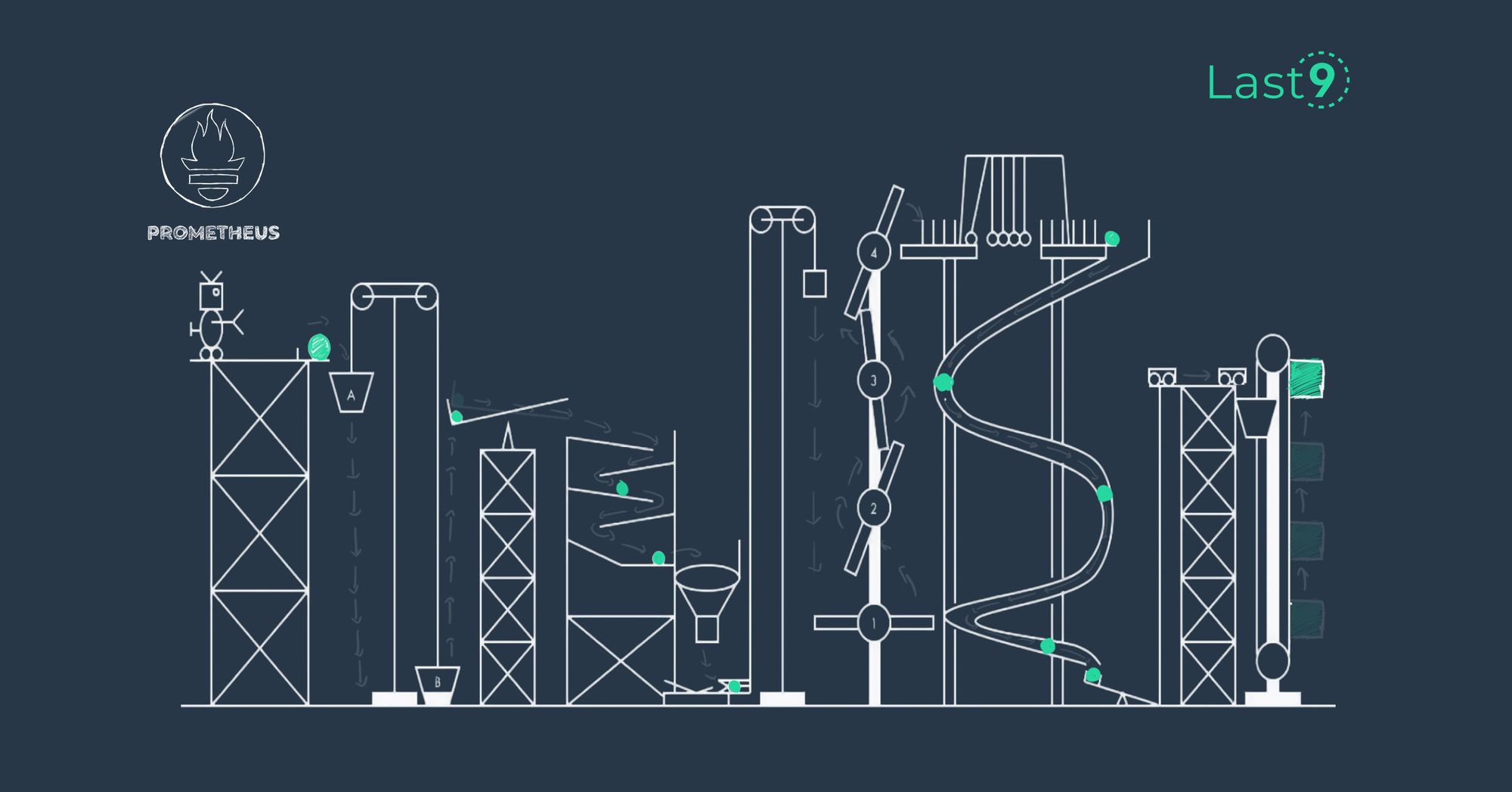

Our first thought was whether the issue was due to a new deployment. It’s often the easiest answer to these problems. The release manager, as well as the on-call SRE, denied any new deployment. Not only that, but all other tools were doing okay. Grafana looked okay, Sentry was doing its job, and Prometheus and APM were doing okay. We even checked the firewall to determine whether there was a drop in traffic, but there wasn’t.


A simple mount command

After nearly 20 hours of struggling to find the problem, we realized that a simple mount command hadn’t run on one of the database shards. Because of that, data was being written to memory, and when the system rebooted, the data was wiped. This machine wasn’t supposed to be commissioned, but we had fixed it, so we put it into circulation.

However, Ansible did not run one of its commands as it should have.

So one machine was left out, one command did not run and the machine rebooted.

We noticed here that only a certain section of the data was gone. So, only a certain type of request started failing, which disconnected the faulty node from the load balancer as an unhealthy upstream.

Now that we had figured it out, the question was how to fix it. But before going there, two more important questions needed to be answered:

- If there’s a mistake in one place, it’s quite likely the mistake was repeated in other places. Where else was this failing?

- How am I going to prevent this situation from ever happening again?

Systems are designed by culture.

Digging Deeper: The Root Cause Analysis

We found out that this was a gap in our first bootstrap run by Ansible. We used Ansible to run commands. But when the (faulty) machine was brought into circulation, one of the Ansible runs didn’t happen. It was a newly provisioned machine, and service discovery did not pick it up.

We were using Nomad to schedule dynamic workloads. This meant requests could go to any of the deployed machines, including faulty ones.

We could have gone old school, used none of these, and avoided the situation. But that wasn’t really an option. We had dynamic workloads and needed a clustered scheduler like Nomad.

But our RCA didn’t end there. A good root cause analysis identifies the business loss as well. Was it data loss? Did it cause a significant amount of embarrassment, if not financial loss? Was there financial loss that couldn’t be measured because the system was down?

Probable Root Cause 1: Raghu forgot to execute the mount command

A common outcome of RCA is that we end up blaming individuals. We did mention this: Raghu forgot to execute the mount command. What we must realize is that individuals cannot be reasons for failures. So, the conclusion of our RCA cannot be that an individual failed to do something. It must be more actionable and concrete.

Probable Root Cause 2: The infrastructure team forgot to execute the mount command

We took another stab at it. We said that the infrastructure team forgot to execute the mount command. But this RCA, too, is not actionable.

Is improving the Infrastructure Team’s memory a probable resolution?

Another thing to consider with the above two causes is the bad apple theory. Should we change the existing team or replace its members? The Bad Apple theory states that if you remove a bad apple from a basket, what remains is a basket of really good apples. Most of the time, changing people doesn’t really solve anything. We have to be pragmatic and cognizant of the fact that skill and ownership always go hand in hand. You have to have the right skills in people, and you have to give them the right ownership along with the right tools. This solution would, therefore, not have achieved the right results.

Human failures are inevitable.

Probable Root Cause 3: Remote Login via SSH Allowed

We then went a step deeper and asked the question: Why was it possible to SSH into the system? If SSH was the issue, a probable resolution could be not to allow SSH. But that would actually hinder work.

There’s a reliability team, a DevOps team, and a development team working on the system. They are invariably going to SSH into the system.

Preventing somebody from doing their job is not going to make the system reliable.

Probable Root Cause 4: Right Tools Were Not Present

We then asked another question: why don’t we have a tool that actually alerts on a configuration mismatch? What if all my systems in production had a way to match their configurations and raise an error every time there was a mismatch? That would be the best thing, right?

This way, people are free to automate, are not burdened, and can actually make decisions freely because the mundane work of configuration validation has been offloaded to a computer. There will be mistakes as long as humans are in charge of the system.

So, the real issue here was that we didn’t have the right tools to catch the smaller details a human could easily overlook.

Anything that you’re not paying attention to is going to fail at some point or the other.

The final root cause analysis

The system configuration validator was absent

There was no system to actually validate the state of the machines every now and then.

Solution: We built it. It became one of our bread-and-butter tools. Every time we had a launch, we ran those configuration validators to see if everything looked okay. We could scan ports across all machines and check that connectivity was fine. And the scope kept increasing. One of the greatest tools that fits here is osquery.

It is a great tool by Facebook that lets you run SQL queries against your systems. You can then compare the results across machines to see which ones are working fine and which ones differ.
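The comparison step can be sketched in a few lines of Python. This is a minimal illustration, not the validator we built: it assumes the facts have already been collected from each machine (for example, from query results) into one flat dictionary per host, and it treats the most common value in the fleet as the expected one. The host names, keys, and values below are hypothetical.

```python
from collections import Counter


def find_config_drift(facts_by_host):
    """Return {host: {key: (actual, expected)}} for hosts that deviate.

    `facts_by_host` maps a host name to a flat dict of observed settings.
    The expected value for each key is the most common value in the fleet,
    which is an assumption; a declared baseline would be stricter.
    """
    keys = set()
    for facts in facts_by_host.values():
        keys.update(facts)

    drift = {}
    for key in sorted(keys):
        values = [facts.get(key) for facts in facts_by_host.values()]
        expected, _ = Counter(values).most_common(1)[0]
        for host, facts in facts_by_host.items():
            actual = facts.get(key)
            if actual != expected:
                drift.setdefault(host, {})[key] = (actual, expected)
    return drift


# Hypothetical facts: the filesystem backing each shard's data directory
# and whether the database port is reachable.
fleet = {
    "db-shard-1": {"data_dir_fs": "ext4", "db_port_open": True},
    "db-shard-2": {"data_dir_fs": "ext4", "db_port_open": True},
    "db-shard-3": {"data_dir_fs": "tmpfs", "db_port_open": True},
}

for host, diffs in find_config_drift(fleet).items():
    print(host, diffs)
```

Here only `db-shard-3` is reported, because its data directory differs from the rest of the fleet. Majority voting is a convenient default for a sketch, but it would miss a mistake that was repeated on most machines, so a checked-in baseline is the safer choice once you know what "correct" looks like.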

FMEA did not exist

Failure Mode and Effects Analysis (FMEA) is a slightly outdated term borrowed from the industrial sector. The cool kids these days call it chaos engineering. FMEA means we run an application in failure mode and see its impact. If we could have run these systems against failure scenarios in our test environments by using fault injection techniques, we would probably have caught the issue.
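As a toy illustration of that idea, the Python sketch below models a node whose writes land in volatile storage when the mount step was skipped, injects a reboot, and checks whether a probe record survives. The `Node` class and its behavior are simplifying assumptions made for this example, not a model of our actual database.

```python
class Node:
    """Toy model of a database node, for illustration only."""

    def __init__(self, name, disk_mounted=True):
        self.name = name
        self.disk_mounted = disk_mounted
        self._disk = {}    # survives a reboot
        self._memory = {}  # wiped by a reboot

    def write(self, key, value):
        # Without the mount, writes silently land in volatile storage.
        target = self._disk if self.disk_mounted else self._memory
        target[key] = value

    def read(self, key):
        if key in self._disk:
            return self._disk[key]
        return self._memory.get(key)

    def reboot(self):
        self._memory.clear()


def survives_reboot(node):
    """Write a probe record, inject a reboot, and check the record is still there."""
    node.write("probe", "ok")
    node.reboot()
    return node.read("probe") == "ok"


def run_failure_drill(nodes):
    """Return the names of nodes that lose data when rebooted."""
    return [node.name for node in nodes if not survives_reboot(node)]


nodes = [
    Node("db-shard-1"),
    Node("db-shard-2"),
    Node("db-shard-3", disk_mounted=False),  # injected fault: mount step skipped
]
print(run_failure_drill(nodes))
```

The drill flags only the node with the skipped mount. In a real test environment the equivalent would be rebooting a freshly provisioned machine and verifying that previously written data is still readable.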

Non-Latent Configuration Validator was not available

Non-latent configuration validation is an approach that advocates early detection of invalid data to ensure fast failure and prevent the situation where an invalid configuration causes bigger damage. For example, if a bad URL is detected during system initialization, there’ll be far less damage than if a customer workflow invokes it.
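A minimal Python sketch of this fail-fast approach follows. It validates the configuration once, at startup, and refuses to start if anything is wrong. The config keys `upstream_url` and `data_dir`, the `ConfigError` class, and the `start_service` function are hypothetical names chosen for this example. The mount check uses the standard library's `os.path.ismount` and is passed in as a parameter so it can be swapped out in tests.

```python
import os
from urllib.parse import urlparse


class ConfigError(Exception):
    """Raised at startup when the configuration is invalid."""


def validate_config(config, is_mount=os.path.ismount):
    """Return a list of problems found in `config`; empty means valid."""
    problems = []

    url = urlparse(config.get("upstream_url", ""))
    if url.scheme not in ("http", "https") or not url.netloc:
        problems.append("upstream_url must be an absolute http(s) URL")

    data_dir = config.get("data_dir", "")
    if not os.path.isdir(data_dir):
        problems.append(f"data_dir {data_dir!r} does not exist")
    elif not is_mount(data_dir):
        # The failure described in this post: the directory exists,
        # but no disk was ever mounted on it.
        problems.append(f"data_dir {data_dir!r} is not a mount point")

    return problems


def start_service(config, is_mount=os.path.ismount):
    """Refuse to start, rather than fail later on a customer request."""
    problems = validate_config(config, is_mount)
    if problems:
        raise ConfigError("; ".join(problems))
    return "started"
```

Collecting every problem before raising, instead of stopping at the first one, means a single failed start reports everything that needs fixing.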

We realized that a non-latent configuration validator was missing.

Research shows that 72% of the failures could have been reduced if configuration validation was not latent!

We identified these three as the root causes of this situation. When we rectified them, that is, when we added the necessary tools, we did not encounter similar issues again.

That’s the power of a strong RCA. And to do it, you need to look beyond individuals, teams, and superficial causes and find actionable solutions.

